I was trying to find a way to decrease the number of lights at the top. The only way my code could be changed for that is to randomly remove lights at an increasing rate as the angle moves closer to the top. This is not a good solution.

With the addition of subsampling, your code looks like it would be better. This could be done by sampling at 2 or more levels deeper in the subdivision but placing lights at the undivided level. Also, I added a photon statement to see what that would be like. I am still working with the settings to try to get it right.
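For readers who want to picture the random thinning mentioned above, here is a minimal sketch. It is not the code from either macro in this thread. It assumes a plain latitude-longitude grid of lights, and the names `Rows`, `Cols`, `Radius`, `Kept` and the brightness value are made up for illustration. A grid cell at a given latitude covers an area proportional to the cosine of that latitude, so each light is kept with that probability.

```povray
// Sketch only: thin a latitude-longitude grid of lights so the number of
// lights per unit area of the dome stays roughly constant.
// Rows, Cols, Radius and the brightness are illustrative assumptions.
#declare Rows   = 8;      // latitude steps from horizon to zenith
#declare Cols   = 32;     // longitude steps around the dome
#declare Radius = 100;
#declare S      = seed(1234);

#declare Kept = 0;
#declare Row = 0;
#while (Row < Rows)
  #declare Lat = 90*(Row + 0.5)/Rows;        // degrees above the horizon
  #declare Col = 0;
  #while (Col < Cols)
    // Keep the light with probability cos(latitude): rows near the top
    // lose most of their lights, rows near the horizon keep nearly all.
    #if (rand(S) < cos(radians(Lat)))
      light_source {
        <0, 0, -Radius>
        color rgb 0.02                       // arbitrary dim light
        rotate x*Lat                         // raise toward the zenith
        rotate y*(360*Col/Cols)              // spin around the dome
      }
      #declare Kept = Kept + 1;
    #end
    #declare Col = Col + 1;
  #end
  #declare Row = Row + 1;
#end
#debug concat("Lights kept: ", str(Kept, 0, 0), " of ", str(Rows*Cols, 0, 0), "\n")
```

Because the thinning is random, the result is only even on average. A layout that puts fewer samples in the upper rows to begin with avoids the noise altogether.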

"Trevor G Quayle" <Tin### [at] hotmail com> wrote:

> Nice

> From what I can gather from your code, is the light spacing based on the x-y

> coordinates of the un-wrapped HDRI? This may cause you problems with lights

> close to the poles of the image as you would tend to get a higher density of

> lights in these areas, which is why I tried to do a geodesic dome

> configuration, to try to get a more even sampling around the dome. I do

> like the supersampling idea, which I didn't try implementing. One thing

> that you can do to help with the light brightness, is do 2 identical passes

> so you can get a light count on the first one and adjust the lights

> accordingly. BTW, I see you did get it macroed after all. I would like to

> figure out some way to get better adaptive sampling. With larger sampling

> spacing, you can miss smaller light sources and with finer sample spacing

> you can get too many lights, depending on the threshold level. The random

> super sampling probably helps a bit with this.

> My macro if you are interested:

>

> //start

> #macro MapLight(n,R,Map,SS,BR,TH, LI)

> #declare PIMAGE = function {pigment{image_map {hdr Map}}}

> union{

> #if (SS)

> sphere{0 R*1.01

> pigment {image_map {hdr Map once interpolate 2 map_type 1} scale

> <1,1,-1>}

> finish {ambient BR diffuse 0}

> }

> #end

> #declare nL=pow(2,(n-1));

> #declare numL=0;

> #declare MaxB=0;

> #declare k=0; #while (k<=1)

> #declare i=-nL; #while (i<=nL)

> #declare nS=4*(nL-abs(i));

> #declare j=0; #while (j<=nS)

> #if (nS=0)

> #declare xp=0;

> #else

> #declare xp=2*j/nS-1;

> #end

> #declare yp=i/nL/2;

> #declare COL = PIMAGE(xp/2+0.5,yp+0.5,0);

>

> #if (COL.gray > MaxB) #declare MaxB=COL.gray; #end

>

> #if (COL.red>TH|COL.green>TH|COL.blue>TH)

> #if (k=0)

> #declare numL=numL+1;

> #else

> light_source{0 color (COL/numL)*LI fade_power 2

> fade_distance R translate<0,0,-R> rotate x*i*90/nL rotate y*j*360/nS rotate

> y*270}

> #end

> #end

> #declare j=j+1; #end

> #declare i=i+1; #end

> #declare k=k+1; #end

> #debug concat("NumLights:",str(numL,0,0),"/",str(2+pow(2,2*n),0,0)," (",str(numL/(2+pow(2,2*n))*100,0,1),"%)\n")

> #debug concat("MaxB-(",str(MaxB,0,3),")\n")

> }

> #end

> //end

>

> I attached a few more scene samples as well.

> In the last one, I removed the HDRI sky sphere and used visible light

> sources instead to be able to see the distribution.

Attachments:

Download 'building.jpg' (17 KB)

I'm in the process of trying to implement subsampling and seeing what works best. Not sure whether it's better to go with random sampling or a fixed subdivision. I have added a type of adaptive subsampling that adds additional lights; this is done in a fixed rectangular subdivision of each vertex and its tributary area. I'll post updated code when I get things a bit more refined.

As a note for your routine, you may want to fix your subsampling averaging method (unless it's meant to work as you've made it). The way you've done it (i.e. iteratively adding a number then dividing by 2) doesn't give a true average. For example, for the series (7,5,3,1,224) you get 10.1, whereas the average is 7.5. Your method gives more weighting to the numbers towards the end of the series. If you want to change this:

Instead of:

#while (CNT < SSAMP)

#local COL = (COL + PIMAGE(X+(rand(S)*SP),Y+(rand(S)*(SP)),0))/2;

#local CNT = CNT + 1;

#end

Try:

#while (CNT < SSAMP)

#local COL = (COL + PIMAGE(X+(rand(S)*SP),Y+(rand(S)*(SP)),0));

#local CNT = CNT + 1;

#end

#local COL = COL/(SSAMP+1);
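To see the difference between the two loops without an image file, the following sketch runs both updates on a small array of plain numbers. The sample values and the names `Samples`, `Running`, `Total` and `Mean` are made up for illustration; they are not part of either macro.

```povray
// Sketch: compare the running "add then halve" update with a true mean.
// The sample values are invented for illustration.
#declare Samples = array[5] {8, 4, 2, 6, 10}
#declare N = dimension_size(Samples, 1);

// Running update: the newest sample always gets weight 1/2, and every
// earlier sample has its weight halved again on each step.
#declare Running = Samples[0];
#declare I = 1;
#while (I < N)
  #declare Running = (Running + Samples[I])/2;
  #declare I = I + 1;
#end

// True mean: accumulate the sum first, divide once at the end.
#declare Total = 0;
#declare I = 0;
#while (I < N)
  #declare Total = Total + Samples[I];
  #declare I = I + 1;
#end
#declare Mean = Total/N;

#debug concat("running = ", str(Running, 0, 3), "  mean = ", str(Mean, 0, 3), "\n")
```

For these values the debug line reports `running = 7.500  mean = 6.000`. The last sample (10) carries half of the running result, while the first two samples carry only 1/16 each.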

I'm looking forward to seeing more of your progress.

PS: I haven't tried adding photons, radiosity or AA yet as I'm not very

patient at seeing results...

-tgq

"Trevor G Quayle" <Tin### [at] hotmail com> wrote:

> I'm in the process of trying to implement subsampling and seeing what works
> best. Not sure whether it's better to go with a random sampling or a fixed
> subdivision. I have added a type of adaptive subsampling that adds
> additional lights; this is done in a fixed rectangular subdivision of each
> vertex and its tributary area. I'll post updated code when I get things a
> bit more refined.
>
> As a note for your routine, you may want to fix your subsampling averaging
> method (unless it's meant to work as you've made it). The way you've done
> it (i.e. iteratively adding a number then dividing by 2) doesn't give a true
> average. For example, for the series (7,5,3,1,224) you get 10.1, whereas
> the average is 7.5. Your method gives more weighting to the numbers towards
> the end of the series. If you want to change this:

>

> Instead of:

> #while (CNT < SSAMP)

> #local COL = (COL + PIMAGE(X+(rand(S)*SP),Y+(rand(S)*(SP)),0))/2;

> #local CNT = CNT + 1;

> #end

>

> Try:

> #while (CNT < SSAMP)

> #local COL = (COL + PIMAGE(X+(rand(S)*SP),Y+(rand(S)*(SP)),0));

> #local CNT = CNT + 1;

> #end

> #local COL = COL/(SSAMP+1);

>

>

> I'm looking forward to seeing more of your progress.

>

> PS: I haven't tried adding photons, radiosity or AA yet as I'm not very

> patient at seeing results...

>

> -tgq

I was just about to try a new method of placing the lights using:

#macro PSURFACE1(PS,U,V)
// Evaluates the quadratic Bezier triangle with the six control points PS at (U,V).
#local w = 1 - U - V;
#local u2 = U * U;
#local v2 = V * V;
#local w2 = w * w;
(w2*PS[0] + (2*U*w)*PS[1] + u2*PS[2] + (2*U*V)*PS[3] + v2*PS[4] + (2*V*w)*PS[5])
#end

Four of these placed around a hemisphere should produce the proper spacing. Subsampling would work by setting a total sampling level, then using a second value to set the level within it at which the lights are placed. This should also make the total number of lights easier to get (I think).

I have not started yet but will soon.

I did make the change to my averaging code you provided above. I had not

even considered that it would be different than a standard average. This

shows my lack of critical math knowledge.

"Trevor G Quayle" <Tin### [at] hotmail com> wrote:

> I got interested in m1j's post about "dome lighting" based on HDRI maps

> (http://news.povray.org/povray.binaries.images/thread/%3Cweb.43912795ae23e4252f222b3b0%40news.povray.org%3E/)

> so I had a try at it (using my own code however, based on a geodesic dome

> macro I wrote many years ago) with impressive results.

>

> HDRI2a.jpg shows a scene using HDRI with radiosity lighting (medium-low

> quality settings though)

> HDRI2c.jpg shows the same scene using the dome lighting based on the HDRI

> (with a threshold limit)

> the rest use the dome lighting using different HDRI maps. As a note, each

> took on average 10mins to render (no AA or rad)

>

> -tgq

A great example of photons!

Attachments:

Download 'building_g.jpg' (20 KB)

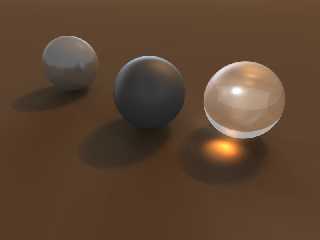

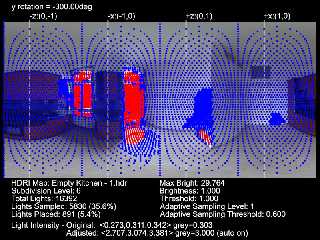

A sample from my latest work on this project. DomeLight.jpg is the actual image. DomeLightEval.jpg is a secondary output from the macro that allows the scene and adjustments to be pre-evaluated. The blue and red dots represent where samples are taken, with red being above the threshold and getting a light, and blue being below with no light. In certain patches the dots are much denser; this results from the adaptive sampling option. I will post the new code later when I'm a bit more satisfied with it.

I ended up not doing subsampling for evaluating light sources, as I found that more often it would steal lights away than add lights to the scene. I may end up adding small-scale subsampling only to average the light colour/intensity of light sources, without adding or removing light sources, if I like the look of it.
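The colour-only subsampling idea could look roughly like the sketch below. This is not the macro described in this post; it is a guess at the idea under stated assumptions. A procedural `bozo` pigment stands in for the HDR map so the snippet parses without an image file, and `TH`, `SSAMP`, `SP`, the sample position and the light location are all illustrative values.

```povray
// Sketch: the centre sample alone decides whether a light is placed;
// jittered sub-samples only smooth its colour, so subsampling can
// neither add nor remove lights. All values here are assumptions.
#declare PIMAGE = function {
  pigment { bozo color_map { [0 rgb 0] [1 rgb <2, 1.6, 1.2>] } scale 0.2 }
}
#declare TH    = 0.5;     // brightness threshold for placing a light
#declare SSAMP = 8;       // number of extra sub-samples
#declare SP    = 0.02;    // jitter width in map units
#declare S     = seed(7);
#declare X = 0.3;         // sample position on the map
#declare Y = 0.6;

#declare COL = PIMAGE(X, Y, 0);
#if ((COL.red > TH) | (COL.green > TH) | (COL.blue > TH))
  // Start the sum with the centre sample, then add SSAMP jittered ones.
  #declare Total = COL;
  #declare CNT = 0;
  #while (CNT < SSAMP)
    #declare Total = Total
      + PIMAGE(X + (rand(S) - 0.5)*SP, Y + (rand(S) - 0.5)*SP, 0);
    #declare CNT = CNT + 1;
  #end
  #declare AVG = Total/(SSAMP + 1);   // true mean over SSAMP+1 samples
  light_source { <0, 100, 0> color rgb <AVG.red, AVG.green, AVG.blue> }
#end
```

Keeping the threshold test on the unaveraged centre sample is what prevents a small bright source from being averaged below the threshold and losing its light.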

-tgq

Attachments:

Download 'DomeLight.jpg' (38 KB)

Download 'DomeLightEval.jpg' (169 KB)

Wow

I am really impressed with the quality of the images you are producing with this technique. I have been doing a few Google searches on geodesic dome construction before attempting to code anything myself. This is something I definitely want to pursue, though, once I have some basic understanding. It's been a while since I coded anything in POV, so I hope this spurs me on to creating something just as wonderful.

Quick question though. I understand that the brightness of the lights is adjusted based on the sample you take from the HDR image. Do you also sample the colour and adjust the light source colour based on this?

Keep up the GREAT work.

Dave.

"Trevor G Quayle" <Tin### [at] hotmail com> wrote:

> A sample from my latest work on this project. DomeLight.jpg is the actual

> image. DomeLightEval.jpg is a secondary output from the macro that allows

> the scene and adjustments to be pre-evaluated. The blue and red dots

> represent where samples are taken, with red being above the threshold and

> getting a light, and blue being below with no light. You can see in certain

> patches where the dots are much denser, this results from the adaptive

> sampling option. I will post the new code later when I'm a bit more

> satisfied with it.

> I ended up not doing subsampling for evaluating light sources as I found

> that more often it would steal lights away than adding additional lights to

> the scene. I may end up adding a small scale subsampling only to average

> the light colour/intensity of lightsources without adding or removing light

> sources though if I like the looks of it.

>

> -tgq

Here are the last two sets of code I tried. Without photons, my renders range from 4 to 15 minutes. With photons, it gets pushed closer to 30 to 50 minutes.

I am sure I could still improve this, but I like your results and may use your code instead.

#macro PSURFACE2(PS,U,V)

#local W = 1 - U - V;

#local u2 = U * U;

#local u3 = u2 * U;

#local v2 = V * V;

#local v3 = v2 * V;

#local w2 = W * W;

#local w3 = w2 * W;

(w3*PS[0] + (3*U*w2)*PS[1] + (3*u2*W)*PS[2] + u3*PS[3] + (3*w2*V)*PS[4]

+ (6*W*U*V)*PS[5] + (3*u2*V)*PS[6] + (3*W*v2)*PS[7] + (3*v2*U)*PS[8] +

v3*PS[9])

#end

//light dome from image.

#macro DOMELITE2(SP,YANGLE,MULT,CLIPV,THRES,LSAMPL,RAD,AREA,AS,LV,FNAME,FTYPE)

#switch(FTYPE )

#case(0)

#local PIMAGE = function {pigment{image_map {jpeg FNAME}}}//grab image

#break

#case(1)

#local PIMAGE = function {pigment{image_map {png FNAME}}}//grab image

#break

#case(2)

#version unofficial megapov 1.21;

#local PIMAGE = function {pigment{image_map {hdr FNAME}}}//grab image

#break

#end

// #local PS = array[10] {
//   <0,RAD,0>, <RAD*0.6,RAD,0>, <RAD,RAD*0.6,0>, <RAD,0,0>,
//   <0,RAD,RAD*0.6>, <RAD*0.6,RAD*0.6,RAD*0.6>, <RAD,0,RAD*0.6>, <0,RAD*0.6,RAD>,
//   <RAD*0.6,0,RAD>, <0,0,RAD>};

#local PS = array[10] {
  <0,0,RAD>, <0,RAD*0.6,RAD>, <0,RAD,RAD*0.6>, <0,RAD,0>,
  <RAD*0.6,0,RAD>, <RAD*0.6,RAD*0.6,RAD*0.6>, <RAD*0.6,RAD,0>, <RAD,0,RAD*0.6>,
  <RAD,RAD*0.6,0>, <RAD,0,0>};

/* 1 2 3 4

5 6 7 8

9 10*/

#local Q = 0;

#while(Q < 4)

#local U = 0;

#while(U < 1-(1/SP))

#local V = 0;

#while(V < 1-U-(1/SP)/2)

#local LLOC = PSURFACE2(PS,V,U);

#local COL = PIMAGE(U/4+Q/4,V,0);///4+Q/4

light_source{LLOC color (COL*MULT)

#if(LV) looks_like{sphere{LLOC,RAD/SP pigment{color COL}

finish{ambient 1}}} #end

#if(AREA) area_light <AS, 0, 0> <0, 0, AS> 4, 4 adaptive 1

jitter circular orient #end

#if(COL.gray > 0.5) photons {refraction on reflection on} #end

rotate y*(Q*90)

}

#local V = V + 1/SP;

#end

#local U = U + 1/SP;

#end

#local Q = Q + 1;

#end

#end

Attachments:

Download 'building_j.jpg' (26 KB)

Here is an example of the light distribution with my last set of code.

Attachments:

Download 'dlite1.jpg' (78 KB)

David Brickell <d.b### [at] nospam gmail gmail com> wrote:

> Wow

>

> I am really impressed with the quality of the images you are producing

> with this technique. I have been doing a few google searches on

> geodesic dome construction before attempting to code anything myself.

> This is something I definitely want to pursue though once I have some
> basic understanding. It's been a while since I coded anything in POV so
> I hope this spurs me on to creating something just as wonderful.
>

Thanks. I'm not sure if it's a true geodesic dome; I just created an algorithm that subdivides a sphere into equal triangles to try to get an even spacing.

> Quick question though. I understand that the brightness of the lights

> is adjusted based on the sample you take from the HDR image. Do you

> also sample the colour and adjust the light source colour based on this?

>

> Keep up the GREAT work.

Yes, the colour is sampled as well. So if there is a predominant colour in

the image, it will show up in the lighting

-tgq

>

> Dave.

-tgq

"m1j" <mik### [at] hotmail com> wrote:

> Here is an example of the light distribution with my last set of code.

Looks like a nice even distribution, you should get better results with that

-tgq