Regarding the AI scrapers ...

Just in the last week we've been hit badly by them - it's been going on for longer, but

it really ramped up in the past 7 days - and one of the bots was stuck stupidly scraping

every possible permutation of the wiki, particularly the special pages, which require

much more DB time than ordinary pages. jr alerted me to the fact the system load was

sitting at 4 (normally it idles at around 0.3) and when I investigated I found the

wiki being absolutely hammered. I did a "tail -f" on the logfile and it was scrolling

too fast to easily read. The bot in question is IMO badly written as it's not as if

MediaWiki's structure is uncommon (looking at you, Meta ... 1.5 million requests to the wiki

alone, consuming 76 GB of HTML).
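
For anyone wanting to pull similar per-bot totals out of their own logs, here is a minimal sketch. It assumes the web server writes the common "combined" access log format (status, bytes, referer, user-agent at the end of each line); the function name `tally_by_agent` is made up for illustration, and this is not necessarily how the figures above were obtained.

```python
import re
from collections import Counter

# Assumed layout (combined log format):
#   ... "REQUEST" STATUS BYTES "REFERER" "USER-AGENT"
LINE_RE = re.compile(r'"[^"]*" (\d{3}) (\d+|-) "[^"]*" "([^"]*)"')


def tally_by_agent(lines):
    """Return two Counters keyed by user-agent: request count and bytes sent."""
    requests, sent = Counter(), Counter()
    for line in lines:
        m = LINE_RE.search(line)
        if m is None:
            continue  # skip lines that do not match the assumed format
        _status, size, agent = m.groups()
        requests[agent] += 1
        # A "-" in the size field means no body was sent.
        sent[agent] += 0 if size == "-" else int(size)
    return requests, sent
```

Passing an open log file to `tally_by_agent` and then calling `most_common(10)` on either Counter gives a quick list of the heaviest user agents by request count or by bytes.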

Not to be outdone, I also found the old bugs.povray.org site being hammered, again by

a bot scraping every possible permutation of the content (sorting, filtering, etc ...

looking at you, Apple).

Between just those two instances, just in the last week, they managed to generate more

than 30 GB of log files alone. The total bandwidth consumed is not known as I did not

have cloudflare enabled for the bugs site (it is now), but I did for the wiki and,

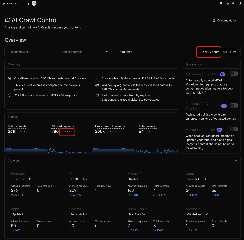

well, I'll let the screenshots do the talking.

I have turned on Cloudflare's AI bot defense which has quieted things quite a bit (see

the final image, aftermath.png - it was taken the day after I turned the bot

protection on). However, I cannot yet enable Cloudflare on the main website (I have to

fix a bug in the login process that only occurs when it's turned on) so it's still

vulnerable (as is the news server).

Look, see and understand why I don't want them darn AI bots finding something else to

chew on ...

Attachments:

Download '76gb-75%.jpg' (168 KB)

Download 'metrics1-75%.png' (504 KB)

Download 'metrics2-75%.png' (418 KB)

Download 'aftermath-75%.png' (412 KB)
